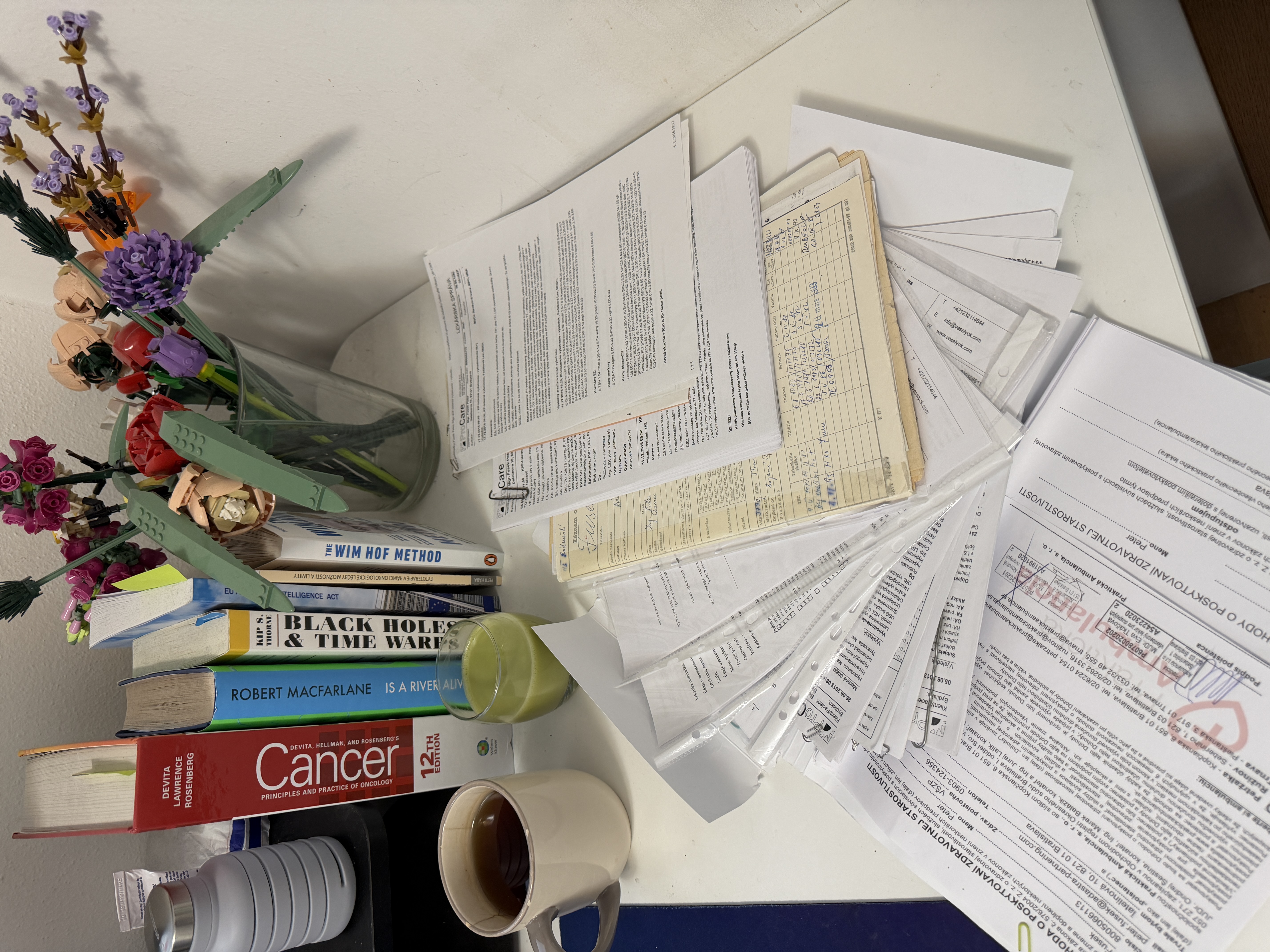

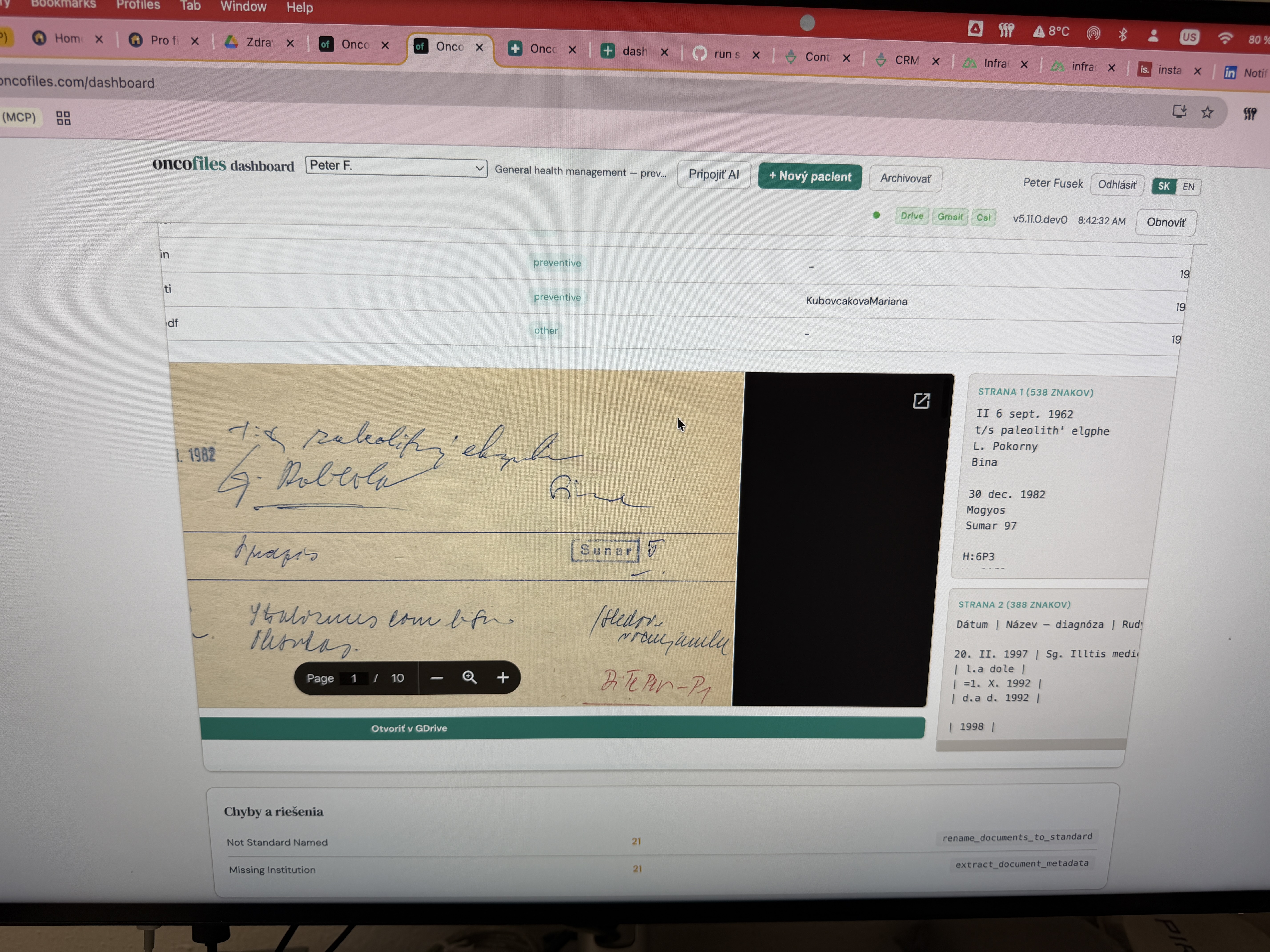

Data & Storage

Where is my data stored?

All your medical documents stay in your own Google Drive. Oncoteam reads and analyzes them, but never copies or stores originals on any external server. You can revoke access anytime from your Google account settings.

Can I run Oncofiles on my own server?

Yes. Oncofiles is open-source — you can self-host it on your own infrastructure for maximum control. Alternatively, you can use the managed instance we operate on Railway (EU-available infrastructure, SOC 2 Type II certified). The choice is always yours: self-hosted for full sovereignty, or managed SaaS for convenience. Either way, your documents stay in your own Google Drive.

Is the data encrypted?

Yes. Google Drive encrypts all files at rest and in transit. Communication between Oncoteam and Google uses HTTPS. No data is transmitted unencrypted.

Who can see my data?

Only you. We have no access to your Google Drive or Gmail. Login uses official Google OAuth — we never see your password or your files. The source code is open-source, so you can verify everything.
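Google OAuth uses the standard authorization-code flow. You type your password only on Google's own sign-in page. The app then receives a one-time code, which it exchanges for a token limited to the permissions you approved. Below is a minimal Python sketch of the first step, building the consent URL. The client ID, the callback URL, and the read-only Drive scope are assumptions for illustration; this FAQ does not say which scopes Oncoteam requests.

```python
import secrets
from urllib.parse import parse_qs, urlencode, urlparse

# Google's OAuth 2.0 authorization endpoint for web-server apps.
GOOGLE_AUTH_ENDPOINT = "https://accounts.google.com/o/oauth2/v2/auth"

# ASSUMPTION: a single read-only Drive scope, for illustration only.
SCOPES = ["https://www.googleapis.com/auth/drive.readonly"]


def build_consent_url(client_id: str, redirect_uri: str, state: str) -> str:
    """URL of Google's own sign-in and consent page; the password is typed there, never in the app."""
    params = {
        "client_id": client_id,
        "redirect_uri": redirect_uri,
        "response_type": "code",  # Google redirects back with a one-time code, not a password
        "scope": " ".join(SCOPES),
        "state": state,  # random value the app checks on the callback (CSRF protection)
        "access_type": "online",
    }
    return f"{GOOGLE_AUTH_ENDPOINT}?{urlencode(params)}"


# HYPOTHETICAL client ID and callback URL.
url = build_consent_url(
    "example-client-id.apps.googleusercontent.com",
    "https://oncoteam.example/oauth/callback",
    state=secrets.token_urlsafe(16),
)
print(parse_qs(urlparse(url).query)["scope"])
```

After you approve, the app exchanges the returned code for an access token limited to the approved scopes. Your Google password never passes through the app.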

Can I remove access?

Anytime. In your Google account settings (myaccount.google.com) you can remove Oncoteam access with one click. Your files remain untouched.

AI Models & Privacy

Does AI learn from my data?

No. Oncoteam uses the Anthropic API (not consumer Claude.ai). Anthropic’s Commercial Terms explicitly state: “Anthropic may not train models on Customer Content from Services.” This is fundamentally different from consumer ChatGPT, which trains on your data by default. Your medical documents are processed in real time, never stored for training, and auto-deleted within 7 days. Voice notes are transcribed by OpenAI’s Whisper model (whisper-1) via the API, under the same no-training guarantee; the audio is ephemeral.

Which AI models process my data?

For text and documents, Oncoteam uses two models from Anthropic, both via the commercial API (zero training on your data):

Claude Haiku 4.5: a lightweight model for fast data tasks such as document scanning, lab value extraction, and medication parsing. Cost: ~$1 per million input tokens.

Claude Sonnet 4.6: an advanced reasoning model for clinical trial analysis, treatment briefings, dose extraction from handwritten notes, and family-friendly summaries. Cost: ~$3 per million input tokens.

Both models run via Anthropic’s API (SOC 2 Type II, ISO 27001). No consumer-grade models (ChatGPT Free, Claude Free) are ever used.
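In effect, the split above routes each task type to one of two model tiers. The following is a minimal Python sketch of that routing, assuming hypothetical task labels. The strings are the display names used in this FAQ, not verified Anthropic API model IDs, and Oncoteam's actual routing code may differ.

```python
from enum import Enum


class Model(Enum):
    # Display names from this FAQ; real Anthropic API model IDs are not shown here.
    HAIKU = "Claude Haiku 4.5"    # fast extraction tasks
    SONNET = "Claude Sonnet 4.6"  # deeper reasoning tasks


# HYPOTHETICAL task labels mirroring the two lists above.
TASK_ROUTES: dict[str, Model] = {
    "document_scan": Model.HAIKU,
    "lab_value_extraction": Model.HAIKU,
    "medication_parsing": Model.HAIKU,
    "trial_analysis": Model.SONNET,
    "treatment_briefing": Model.SONNET,
    "handwritten_dose_extraction": Model.SONNET,
    "family_summary": Model.SONNET,
}


def pick_model(task: str) -> Model:
    """Return the tier for a known task; unknown tasks fail loudly instead of defaulting."""
    try:
        return TASK_ROUTES[task]
    except KeyError:
        raise ValueError(f"no model route for task {task!r}") from None


print(pick_model("lab_value_extraction").value)  # Claude Haiku 4.5
```

Unknown tasks fail instead of silently defaulting to the cheaper model. This prevents a clinically sensitive task from landing on the lighter tier by accident.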

How long does Anthropic keep my data?

API inputs and outputs are automatically deleted within 7 days; Anthropic reduced this window from 30 days on September 15, 2025. This retention serves solely for safety monitoring (abuse prevention), not training. Anthropic also offers Zero Data Retention (ZDR) for enterprise and team API customers. Oncoteam currently runs on the standard API, which already provides the no-training and 7-day guarantees. Details: Anthropic Privacy Center. For comparison, consumer ChatGPT retains data indefinitely unless you manually delete it.

Are patient identities anonymized?

Yes. Patient IDs in the system are random 3-character codes (not names or birth dates). No personally identifiable information appears in environment variables, API keys, or code. Each patient gets a dedicated bearer token that isolates their data at the database level.
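This answer relies on two mechanisms: random short codes and per-patient bearer tokens. Both can be sketched in Python with the standard library. Everything specific here is an assumption. The code alphabet, the token length, the SQLite table and column names, and hashing tokens before storage are illustrative choices, not Oncoteam's documented schema. The pattern is what matters: a token resolves to exactly one patient code, and every data query is filtered by that code.

```python
import hashlib
import secrets
import sqlite3
import string

# ASSUMPTION: codes use uppercase letters and digits, giving 36**3 = 46,656 possible IDs.
ALPHABET = string.ascii_uppercase + string.digits
CODE_LENGTH = 3


def new_patient_code(existing: set[str]) -> str:
    """Draw a random 3-character code not already in use (no names, no birth dates)."""
    if len(existing) >= len(ALPHABET) ** CODE_LENGTH:
        raise RuntimeError("code space exhausted")
    while True:
        code = "".join(secrets.choice(ALPHABET) for _ in range(CODE_LENGTH))
        if code not in existing:
            return code


def hash_token(token: str) -> str:
    # Store only a digest, so a database leak does not expose usable tokens.
    return hashlib.sha256(token.encode()).hexdigest()


def issue_token(db: sqlite3.Connection, patient_code: str) -> str:
    """Create a per-patient bearer token; the plaintext is returned once and never stored."""
    token = secrets.token_urlsafe(32)
    db.execute("INSERT INTO tokens (token_hash, patient_code) VALUES (?, ?)",
               (hash_token(token), patient_code))
    return token


def labs_for(db: sqlite3.Connection, bearer_token: str) -> list[tuple[str, float]]:
    """Resolve the token to one patient, then filter the query by that patient's code."""
    row = db.execute("SELECT patient_code FROM tokens WHERE token_hash = ?",
                     (hash_token(bearer_token),)).fetchone()
    if row is None:
        raise PermissionError("unknown bearer token")
    return db.execute("SELECT name, value FROM labs WHERE patient_code = ? ORDER BY name",
                      (row[0],)).fetchall()


# HYPOTHETICAL schema for the sketch.
db = sqlite3.connect(":memory:")
db.execute("CREATE TABLE tokens (token_hash TEXT PRIMARY KEY, patient_code TEXT NOT NULL)")
db.execute("CREATE TABLE labs (patient_code TEXT NOT NULL, name TEXT, value REAL)")

patient_a = new_patient_code(set())
patient_b = new_patient_code({patient_a})
token_a, token_b = issue_token(db, patient_a), issue_token(db, patient_b)
# Dummy values for illustration only.
db.executemany("INSERT INTO labs VALUES (?, ?, ?)",
               [(patient_a, "CEA", 4.2), (patient_b, "CEA", 12.9), (patient_b, "WBC", 3.1)])

print(labs_for(db, token_a))  # [('CEA', 4.2)]
```

There are only 46,656 possible 3-character codes under this alphabet, so uniqueness has to be checked when each code is created. That is why `new_patient_code` takes the set of codes already issued.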

Infrastructure & Compliance

Where does Oncoteam run?

Oncoteam and Oncofiles run on Railway — a cloud platform with SOC 2 Type II certification (Trust Center). Railway offers data centers across Americas, EMEA, and APAC. HIPAA BAA is available on their Enterprise plan. If you self-host Oncofiles, you choose your own infrastructure entirely.

What security certifications apply?

Anthropic (AI provider): SOC 2 Type I & II, ISO 27001:2022, ISO 42001:2023 (AI Management), HIPAA BAA available. Trust Center.

Railway (hosting): SOC 2 Type II, SOC 3, HIPAA BAA on Enterprise, GDPR DPA available. Trust Center.

Google Drive (document storage): SOC 2, ISO 27001, HIPAA BAA on Workspace. Your files benefit from Google’s enterprise-grade encryption.

How is this different from pasting results into ChatGPT?

Radically different. When you paste lab results into consumer ChatGPT, your data is used for model training by default and retained indefinitely, and there is no patient isolation. Oncoteam uses the commercial Anthropic API, where training on your data is contractually prohibited and retention is 7 days (for abuse-prevention review only). Each patient also has isolated access tokens, and the entire system is open-source for verification. It’s the difference between shouting your diagnosis in a crowd and talking to your doctor in a private room.

General

Does Oncoteam replace my oncologist?

No. Oncoteam helps you prepare for appointments, understand lab results, and find relevant clinical trials. All treatment decisions should always be made with your oncology team.

Why is the source code open?

Because trust requires transparency. When it comes to your health data, “trust us” isn’t good enough. Every line of code is on GitHub — you (or any developer you trust) can verify exactly what happens with your data. Both Oncoteam and Oncofiles are fully open-source.

Slovak eHealth context

How does Oncoteam relate to OnkoAsist / NCZI?

OnkoAsist is a Slovak national eHealth project run by NCZI. Its scope is the pre-treatment pathway: helping a patient get from first symptom to start of treatment within 60 days instead of 160. Oncoteam covers the other half of the journey, from the moment treatment begins, through every chemo cycle, lab result, and trial that might match. Different scope, different user, different cadence. We think it’s a good project, and we’re happy to see the state investing in the first half.

Does Oncoteam replace national eHealth systems?

No. Oncoteam is not national infrastructure or a registered medical device; it’s a patient-and-family advocacy tool. It deliberately stays advisory-only. National systems like eZdravie, the National Oncology Register (Národný onkologický register), and OnkoAsist have legal mandates, eID integration, and inter-provider data flow that a bottom-up tool shouldn’t try to replicate. If any of those systems eventually expose a standards-based API, we’d welcome the chance to feed patient-reported outcomes back into them. These would include symptoms, toxicity, and quality-of-life reports collected via WhatsApp.